One of the biggest new features in the VMware vSphere 6.0 suite is probably Virtual Volumes, a fundamental part of VMware’s SDS vision.

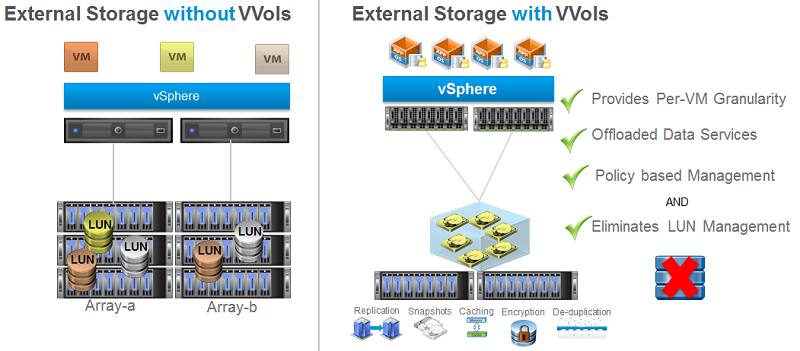

What are vSphere Virtual Volumes (or VVols)? They are an integration and management framework that turns external storage into VM-aware storage.

From my point of view this is the biggest news of this release (and probably the most announced at previous VMworld events… the first tech preview was in 2012!), and it can really change the storage part of a virtualized design, including how it is designed.

More importantly, it could also be a great opportunity for storage vendors to bring some interesting features to their products and to differentiate themselves by providing more native, VM-centric features (to be honest, some of them already have this approach).

For sure it is a good deal for both VMware and storage vendors, and it demonstrates that VMware does not necessarily replace storage functions or make arrays dumb. Yes, vSphere can provide several interesting functions, but storage-side features are still important!

But also note that storage vendors can implement the same features in different ways, so do not just compare feature checklists: learn more about each solution (and read also this post).

Big changes in storage world using Virtual Volumes

Using VVols you can avoid the complexity of block-based storage related to LUN creation, LUN mapping (or masking), LUN sizing, … Each virtual disk can be natively represented on the array, and the existing storage I/O protocols (FC, iSCSI, NFS) remain supported.

In this way VVols enable VM-granular storage operations using array-based data services, and also extend vSphere Storage Policy-Based Management to the storage ecosystem, in order to have a “unified” SDS approach that is VM-centric and policy-driven.

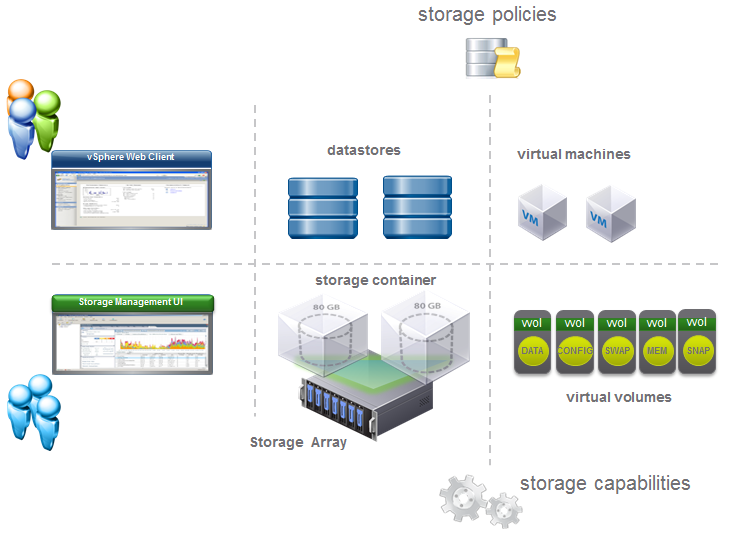

It also provides better visibility for both virtualization and storage admins.

Or it can make the virtualization admin completely autonomous on storage provisioning (once VVols have been configured).

More importantly, it can virtualize both SAN and NAS devices, making them behave like object storage (where the objects are the VM files), and it’s included with vSphere (from the Standard edition!).

How does it work?

There are some important concepts around this technology:

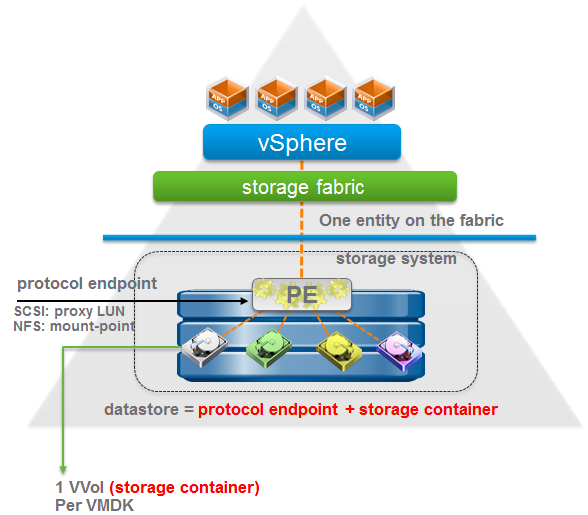

- No file system: VVols do not require a VMFS datastore (for block-oriented storage)

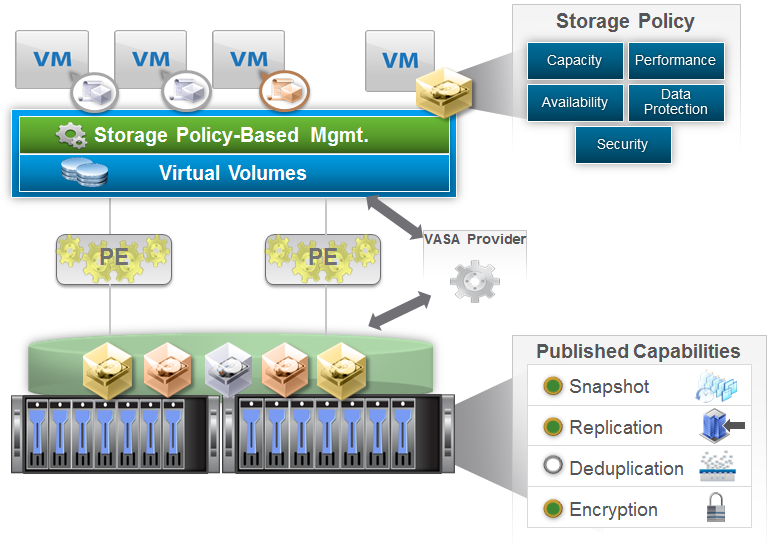

- The ESXi host manages the array through the VASA (vSphere APIs for Storage Awareness) APIs, which are a core component (note that VASA 2.0 is now available in the Standard edition)

- Arrays are logically partitioned into containers, called Storage Containers

- VM disks, called Virtual Volumes, are stored natively on the Storage Containers

- I/O from ESXi to the array is addressed through an access point called a Protocol Endpoint (PE)

- Data services are offloaded to the array

- Everything is managed through the Storage Policy-Based Management framework

To better understand each new term and part of this technology let’s analyze each of them.

Vendor Provider (VP)

The Vendor Provider is a software component (usually a plug-in) developed by the storage array vendor. This plug-in uses a set of out-of-band management APIs. The VP exports the storage array capabilities and presents them to vSphere through the VASA (2.0) APIs. Note that a VASA Provider can be implemented within the array’s management server or directly in the storage firmware; this depends on the storage vendor.

Adding a new Vendor Provider is like adding a VASA 1.0 provider, and a single VASA Provider can manage multiple arrays.

Protocol Endpoints (PE)

Protocol Endpoints are a transport mechanism, or access points, that enable communication between ESXi hosts and storage array systems. They are part of the physical storage fabric, they establish a data path on demand from VMs to their respective virtual volumes, and they must be created by storage admins.

Protocol Endpoints are compatible with all SAN and NAS protocols:

- iSCSI

- NFS

- FC

- FCoE

They can also manage all storage access paths and policies:

- Multi-path policies

- NFS topologies

There are some differences between Protocol Endpoints and VMware datastores:

- A PE no longer stores VMDKs; it is only the access point

- You won’t need as many datastores or mount points as before

Storage Container (SC)

Storage containers are equivalent to datastores in a sense, but they are focused on the allocation of chunks of physical storage. A storage container is a logical storage construct for grouping virtual volumes, based on the grouping of VMDKs onto which application-specific SLAs are translated to capabilities through the VASA APIs.

Again, like Protocol Endpoints, a Storage Container must be created by storage admins. A single Storage Container can be simultaneously accessed via multiple Protocol Endpoints.

There are some similarities between Storage Containers and VMware datastores:

- Capacity is based on physical storage capacity

- Logically partition or isolate VMs with diverse storage needs and requirements

- Minimum one storage container per array

- Maximum depends on the array

Virtual Volumes (VVols)

VVols are stored in storage containers and mapped to virtual machine files/objects such as VM swap, VMDKs and their derivatives. There are five different types of recognized Virtual Volumes:

- Data – Equivalent to VMDKs

- Config – VM Home, configuration related files, logs, etc

- Memory – Snapshots

- Swap – VM memory swap

- Other – Generic type for solution-specific objects
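
To make the mapping between a VM and its virtual volumes more concrete, here is a minimal Python sketch. It is only an illustration, not a VMware API: the `VM` class and the `vvols_for` function are hypothetical names, and the counting rules (one Config VVol per VM, one Data VVol per virtual disk, a Swap VVol only while the VM is powered on, one Memory VVol per snapshot that includes memory) are simplifying assumptions that ignore any extra volumes an array may create.

```python
from dataclasses import dataclass
from enum import Enum


class VVolType(Enum):
    DATA = "data"      # virtual disks (VMDK equivalents)
    CONFIG = "config"  # VM home: configuration files, logs
    MEMORY = "memory"  # memory state saved with a snapshot
    SWAP = "swap"      # VM memory swap
    OTHER = "other"    # solution-specific objects


@dataclass
class VM:
    """Hypothetical, simplified view of a VM for this example."""
    name: str
    disks: int
    powered_on: bool = False
    memory_snapshots: int = 0  # snapshots that include the memory state


def vvols_for(vm: VM) -> dict:
    """Count the VVols of each type under the assumptions stated above."""
    return {
        VVolType.CONFIG: 1,
        VVolType.DATA: vm.disks,
        VVolType.SWAP: 1 if vm.powered_on else 0,
        VVolType.MEMORY: vm.memory_snapshots,
    }
```

For example, `vvols_for(VM("web01", disks=2, powered_on=True))` counts one Config, two Data and one Swap volume, which shows how a single VM becomes several independent objects on the array.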

Provisioning of VVols is consistent between the vSphere side and the storage side:

- vSphere side: operations are translated into VASA API calls in order to create the individual virtual volumes

- Storage side: operations are offloaded to the array for the creation of virtual volumes on the storage container that match the capabilities defined in the VM Storage Policies
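
The two sides of the provisioning flow can be sketched in a few lines of Python. This is a toy model under stated assumptions, not real VASA calls: `StorageContainer`, `plan_vvols` and `create_on_array` are hypothetical names, capabilities are plain strings, and the 4 GB size of the config volume is an arbitrary placeholder.

```python
from dataclasses import dataclass, field


@dataclass
class StorageContainer:
    """Hypothetical storage container with advertised capabilities."""
    name: str
    capabilities: set
    free_gb: int
    vvols: list = field(default_factory=list)


def plan_vvols(vm_name, disk_sizes_gb):
    """vSphere side: turn one 'create VM' into one request per virtual volume."""
    requests = [(f"{vm_name}-config", 4)]  # placeholder size, an assumption
    requests += [(f"{vm_name}-data{i}", size) for i, size in enumerate(disk_sizes_gb)]
    return requests


def create_on_array(containers, requests, required):
    """Storage side: place all volumes on a container that satisfies the policy."""
    total = sum(size for _, size in requests)
    for sc in containers:
        # The container must offer every required capability and enough space.
        if required <= sc.capabilities and sc.free_gb >= total:
            for name, size in requests:
                sc.vvols.append(name)
                sc.free_gb -= size
            return sc
    raise LookupError("no storage container matches the policy")
```

The point of the sketch is the split of responsibilities: the hypervisor only describes what it needs, and the array decides where the volumes are created.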

This kind of granularity permits several interesting features on the storage side (and each storage vendor can try to bring more innovation to this kind of feature): potentially it is possible to provide features like storage QoS at the VM level, VM replication, better thin provisioning with space reclaim at the guest level, …

And VM snapshots can really change too!

Snapshots

Snapshots are a point-in-time, copy-on-write image of a Virtual Volume with a different ID from the original. Of course snapshots are useful in contexts like creating a quiesced copy for backup or archival purposes, creating a test and rollback environment for applications, instantly provisioning application images, and so on…

Using Virtual Volumes, two different types of snapshots are supported:

- Managed Snapshot: managed by vSphere (similar to traditional VMware snapshots), with a maximum of 32 snapshots supported for fast clones

- Unmanaged Snapshot: managed by the storage array, with a maximum number of snapshots dictated by the storage array
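
The difference between the two limits can be expressed as a small check. This is just an illustrative sketch: `can_take_snapshot` is a hypothetical helper, and the `array_limit` value would have to come from the storage vendor, since vSphere does not define it.

```python
from typing import Optional

MANAGED_SNAPSHOT_LIMIT = 32  # limit for vSphere-managed snapshots, as stated above


def can_take_snapshot(existing: int, managed: bool,
                      array_limit: Optional[int] = None) -> bool:
    """Return True if one more snapshot fits within the applicable limit."""
    if managed:
        return existing < MANAGED_SNAPSHOT_LIMIT
    if array_limit is None:
        # For unmanaged snapshots only the array knows its own maximum.
        raise ValueError("array_limit is required for unmanaged snapshots")
    return existing < array_limit
```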

Binding

Bindings are data path coordination mechanisms that occur between VASA providers and ESXi hosts for accessing virtual volumes. There are different binding mechanisms:

- Bind – allows the array to create I/O channels for a virtual volume

- Unbind – destroys the I/O channel for a virtual volume to a given ESXi host

- Rebind – provides the ability to change the I/O channel (PE) for a given virtual volume at run time using events
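
The three operations can be pictured as updates to a table that records which Protocol Endpoint each host uses to reach each virtual volume. The following Python sketch is a toy model, not the VASA interface: `BindingTable` is a hypothetical class, and picking the least-used PE on bind is only an assumption (in reality the array decides according to its own logic).

```python
class BindingTable:
    """Toy model of which Protocol Endpoint each (host, VVol) pair uses."""

    def __init__(self, protocol_endpoints):
        self.pes = list(protocol_endpoints)
        self.bindings = {}  # (host, vvol) -> PE name

    def bind(self, host, vvol):
        # The array picks the PE; here we simply take the least-used one.
        in_use = list(self.bindings.values())
        pe = min(self.pes, key=in_use.count)
        self.bindings[(host, vvol)] = pe
        return pe

    def unbind(self, host, vvol):
        # Destroy the I/O channel; raises KeyError if the volume is not bound.
        del self.bindings[(host, vvol)]

    def rebind(self, host, vvol, new_pe):
        # Move an existing binding to another PE at run time.
        if (host, vvol) not in self.bindings:
            raise KeyError("volume is not bound on this host")
        if new_pe not in self.pes:
            raise ValueError(f"unknown protocol endpoint: {new_pe}")
        self.bindings[(host, vvol)] = new_pe
```

Note that the virtual volume itself never moves in this model: only the access path changes, which is why a PE is an access point and not a place where data lives.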

Storage Policy Based Management (SPBM)

Like other VMware technologies (for example Virtual SAN), everything can be defined by storage policies at the VM level.

VM policies are a component of the vSphere Storage Policy-Based Management (SPBM) framework and can be matched against storage array capabilities. Storage array capabilities are array-based features and data service specifications that describe which storage requirements an array can satisfy; the array advertises them as capabilities. Storage capabilities define what an array can offer to storage containers, as opposed to what the VM requires.

Storage Capabilities and their definitions can be advertised to SPBM from different sources:

- Vendor defined: vendor-specific capabilities, advertised to vSphere through the Vendor Provider and VASA APIs

- End User defined: manually defined tags and categories
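
The matching between what a VM requires and what the storage offers can be sketched as a simple set comparison. This is a hedged illustration, not how SPBM is implemented: `compatible_containers` is a hypothetical function, capabilities and tags are reduced to plain strings, and the container names are invented.

```python
def compatible_containers(policy, vendor_caps, user_tags):
    """Return the names of containers whose combined capabilities satisfy the policy.

    policy      -- set of capabilities the VM requires
    vendor_caps -- {container: set of vendor-defined capabilities}
    user_tags   -- {container: set of user-defined tags}
    """
    result = []
    for name in sorted(set(vendor_caps) | set(user_tags)):
        # A container offers the union of both capability sources.
        offered = vendor_caps.get(name, set()) | user_tags.get(name, set())
        if policy <= offered:
            result.append(name)
    return result
```

With `vendor = {"sc-gold": {"replication", "snapshots"}, "sc-bronze": {"snapshots"}}` and `tags = {"sc-gold": {"tier:prod"}, "sc-bronze": {"tier:test"}}`, a policy requiring both `replication` and `tier:prod` is compatible only with `sc-gold`.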

VAAI vs VVol

Certain offloading operations will be done via VASA and others will be done using the standard protocol commands. For more information see these posts: Virtual Volumes (VVols) vSphere APIs & Cloning Operation Scenarios and vSphere Virtual Volumes Interoperability: VAAI APIs vs VVOLs.