With the announcement of the new vSphere 6.7 Update 1 you should also expect a new version of VMware vSAN (the bits are included in vSphere), and here it is: vSAN 6.7 Update 1 (strangely, the name vSAN 6.8 was not used).

VMware vSAN has grown very fast in recent years, not only in the number of versions (quite impressive) but also in customer adoption, with more than 14,000 organizations using vSAN and 110% year-over-year growth.

VMware also claims a 37% share of the HCI market, according to IDC’s 1Q2018 Worldwide Quarterly Converged Systems Tracker, June 2018. If true, that is a very large number, but it would be interesting to see how it is calculated (by number of nodes, by number of clusters, production clusters only?).

Anyway, now it’s the turn of vSAN 6.7 Update 1, with some improvements and new features:

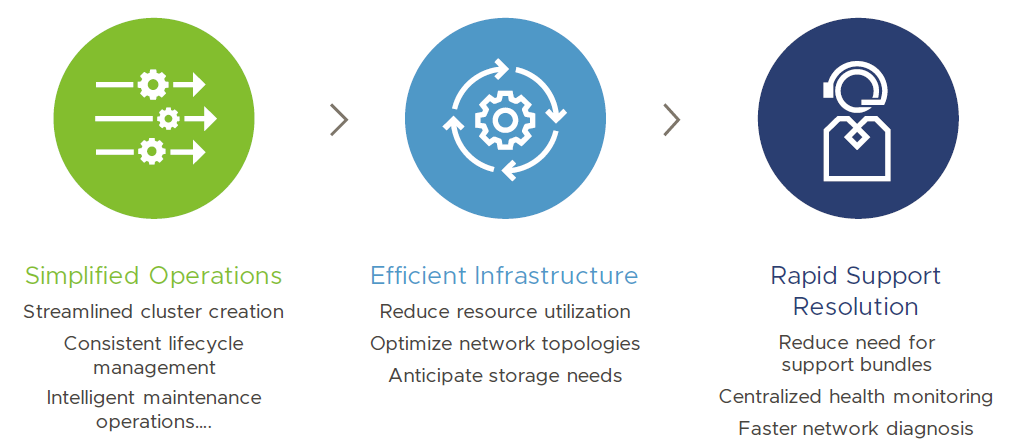

Manageability has improved in different ways.

First, thanks to the new vSphere Client (which is now finally fully featured and mature), it is possible to manage a vSAN environment with a fast and effective UI (but of course you can also use the CLI). The new “Quickstart” guided cluster creation helps when building a new vSAN cluster, and also when extending or upgrading an existing one, by configuring vSAN, HA, DRS, EVC, NTP and other settings on all nodes.

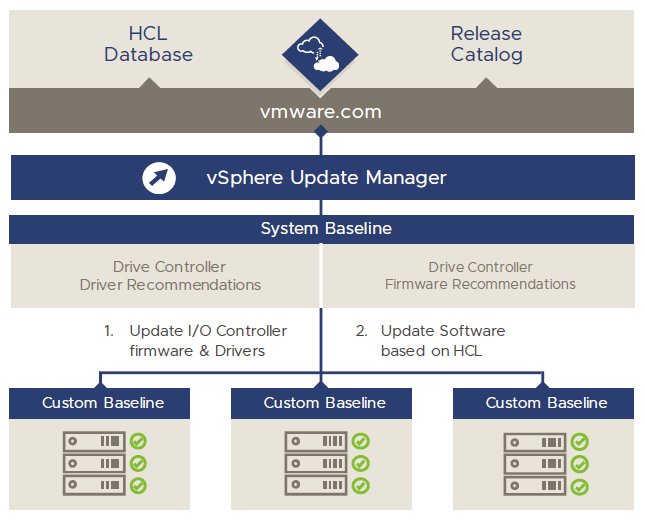

Also, VMware Update Manager (VUM) has been integrated into the vSphere Client starting with vSphere 6.7, and it is now even more powerful for vSAN: ESXi, driver, and firmware updates are all centralized through VUM.

It also supports custom vendor ISOs for OEM-specific builds and drivers, as well as firmware updates for some HBAs, such as the Dell HBA330 (although this was already partially available in vSAN 6.5 and 6.6).

It would be nice to also have disk firmware checks and update/upgrade management, but this does not seem to be planned for this release.

And finally, VUM now supports vCenter without internet connectivity. Yes, it was already possible to use an HTTP proxy, but there are so many isolated environments in the world (for different reasons) that this feature is close to mandatory for a storage product.

Storage analysis and reporting are also much clearer, with improved insight into vSAN cluster capacity, including:

- Historical capacity reports – Report total, used, and free capacity over time, and also track the history of deduplication and compression ratios

- Usable capacity estimator – Displays usable capacity based on the selected storage policy, making it easy to run “what if” estimates when using space-efficient RAID-5/6
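
To give an idea of the arithmetic behind such a “what if” estimate, here is a minimal Python sketch. It is not VMware's estimator: the function name and the policy labels are made up for illustration, and it only applies the commonly documented raw-space multipliers (2x for RAID-1 with FTT=1, 3x for RAID-1 with FTT=2, 1.33x for RAID-5, 1.5x for RAID-6). It ignores slack space, deduplication/compression and metadata overhead, so real numbers will differ.

```python
from fractions import Fraction

# Raw space consumed per unit of VM data, per storage policy.
# Labels are illustrative only; multipliers are the commonly documented ones.
POLICY_OVERHEAD = {
    "RAID-1 FTT=1": Fraction(2, 1),  # two full copies
    "RAID-1 FTT=2": Fraction(3, 1),  # three full copies
    "RAID-5 FTT=1": Fraction(4, 3),  # 3 data + 1 parity
    "RAID-6 FTT=2": Fraction(3, 2),  # 4 data + 2 parity
}


def usable_capacity_tb(raw_tb, policy):
    """Rough usable capacity (TB) for a given raw capacity and policy.

    Hypothetical helper: ignores slack space, dedup/compression and
    on-disk format overhead.
    """
    return float(Fraction(raw_tb) / POLICY_OVERHEAD[policy])


if __name__ == "__main__":
    raw = 100  # TB of raw cluster capacity (example value)
    for policy in POLICY_OVERHEAD:
        print(f"{policy}: {usable_capacity_tb(raw, policy):.1f} TB usable")
```

With 100 TB raw, mirroring with FTT=1 leaves about 50 TB for VM data, while RAID-5 leaves about 75 TB, which is why the erasure coding policies are called space-efficient.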

And the CLI is finally unified around PowerCLI, with new cmdlets replacing 18 RVC commands. In my opinion, RVC was complex and confusing: too many CLIs, too many options, too many different ways to perform CLI-based operations. Focusing on PowerCLI gives new users one single scripting tool. Note that most of the new cmdlets require PowerCLI version 10.2.0.

For space optimization, vSAN finally supports storage reclamation: previous versions of vSAN were not able to use TRIM/UNMAP commands from the guest OS (as demonstrated by Cormac Hogan in his post). Now it is possible to reclaim capacity freed in the guest OS, and the initially supported guest OSes are:

- Windows Server 2012 or Windows 8 and newer

- Linux supporting ext4, xfs, btrfs, etc.

Very good, considering that vSAN provisioning is by default a form of thin provisioning and that guest OS space reclamation was already available in VMFS6.

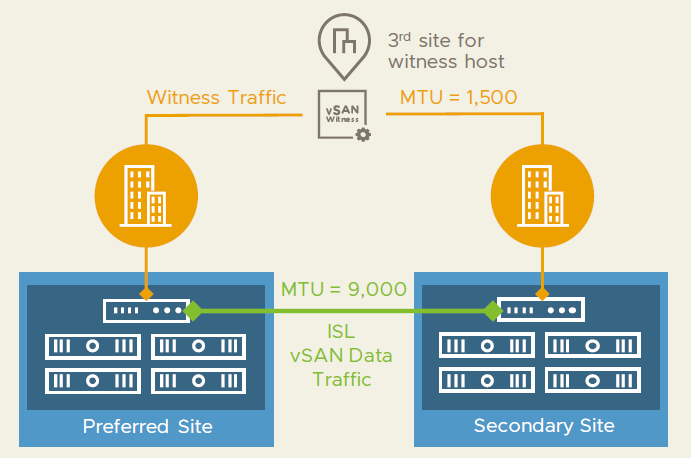

For vSAN Stretched Cluster there is some important news, such as mixed MTU size support for stretched clusters with witness traffic separation. The aim is to make the witness site more and more a site with low requirements (or at least different requirements). I hope to see the same for vCenter HA, but so far its witness node still has too many network requirements.

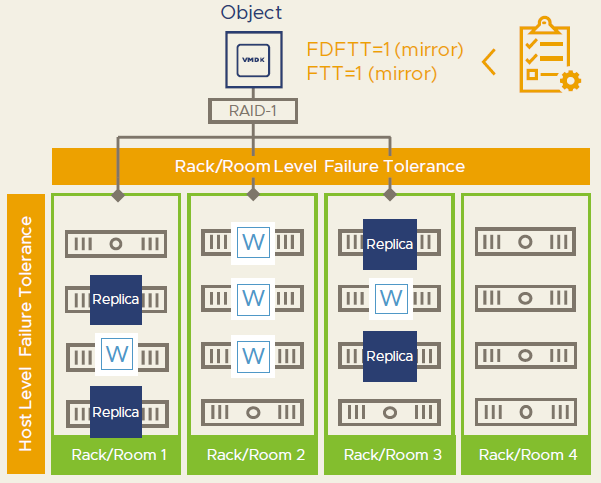

Another important feature (or capability extension) is secondary levels of protection for non-stretched clusters, which can protect against rack/floor failure and node failure simultaneously if racks/floors are grouped into different “Fault Domains” (a feature already available since vSAN 6.0). The local redundancy can be applied with storage policies using the “Fault Domain Failures to Tolerate” (FDFTT) setting.

In my opinion, this option is very interesting and can make stretched clusters less attractive (in some cases), moving to a new type of “Campus Cluster” where you have multiple sites (in the same campus), each active, and not necessarily only three sites.

Unfortunately, there still isn’t an option for secondary redundancy in a 2-node vSAN cluster configuration.

Is that all? No… there will be more in the future roadmap, like:

- Native data protection solution – an interesting option, but I would like to see also a native replication solution without vSphere Replication.

- File services – This could be cool, depending on how it is implemented

- AWS EBS Support – Probably only for vSphere on AWS

In a few years vSAN has become an interesting solution:

- vSAN 5.5: First version of the product

- vSAN 6.0 (March 2015): add All Flash Array (AFA), 64-node cluster size, more than 2x hybrid speed, Fault Domains

- vSAN 6.1 (September 2015): add stretched cluster and 2-node ROBO scenarios

- vSAN 6.2 (March 2016): add deduplication and compression (only for AFA) and quality of service

- vSAN 6.5 (October 2016): add iSCSI access, 2-node direct connect and management improvements

- vSAN 6.6 (April 2017): add vSAN encryption, Local Protection for Stretched Clusters, no more multicast dependency, vSAN Config Assist / Firmware Update

- vSAN 6.7 (April 2018): vSphere Client support, guest clustering with iSCSI, faster destaging.