The new Windows Server 2016 Technical Preview 2 brings more features, as described in a previous post. Some of them relate to the storage side of the Windows Server platform. Starting with Windows Server 2012, Microsoft introduced a lot of storage features, including SMB3, deduplication, a new filesystem for huge volumes (ReFS), Storage Spaces and the Scale-Out File Server solution. Windows Server 2012 R2 then also added multi-tiering.

Windows Server 2016 adds Storage Replica (to build, for example, a stretched cluster), an improved storage QoS, a better deduplication engine and a completely new Storage Spaces Direct (S2D).

The last one is the most interesting because it makes the Scale-Out File Server a real scale-out architecture.

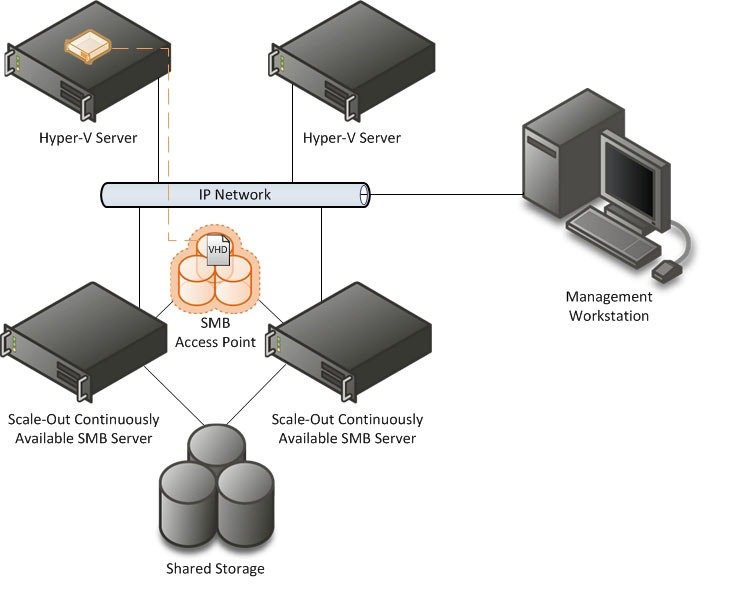

In Windows Server 2012 and 2012 R2 the Scale-Out File Server solution is a really scalable file server (with multiple active nodes) based on the SMB3 protocol, but it is not exactly a scale-out architecture, at least NOT for the Storage Spaces part (see also this post):

The (big) limit is that you still need a hardware component to provide shared storage (in SAS JBOD mode) for the Storage Spaces part. The CSV and the file server layers were effectively a true scale-out architecture, but the disk part was just a shared bunch of disks.

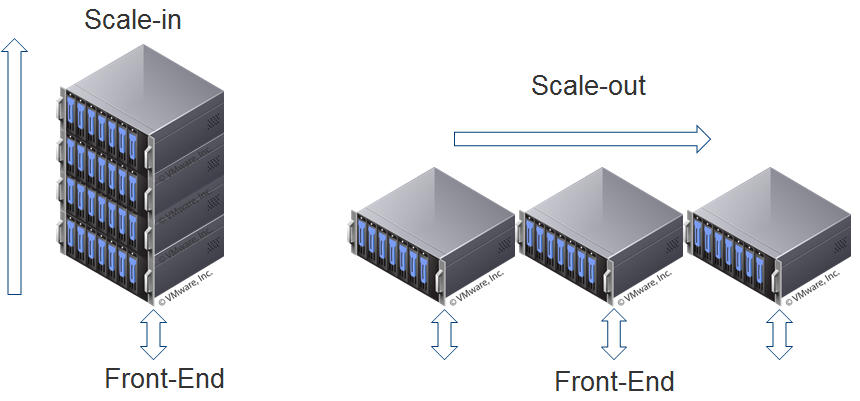

But in the storage world, a scale-out architecture is usually a solution built with multiple arrays (in this case each array is a server node), without shared storage, that presents a single logical view (but with the power of all of them, both in capacity and in performance).
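To make the "single logical view" idea concrete, here is a minimal Python sketch. It is only a toy model: the `StorageNode` class, the `logical_view` function and all the figures are hypothetical, and it ignores resiliency overhead and network limits. It simply shows that, in an idealized scale-out design, adding a node grows both capacity and performance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StorageNode:
    """One server node with only local disks (hypothetical figures)."""
    name: str
    capacity_tb: float       # raw local capacity
    throughput_mbps: float   # sustained throughput the node can deliver


def logical_view(nodes):
    """Return (capacity, throughput) of the single logical pool.

    Idealized assumption: no resiliency overhead and no network bottleneck,
    so the pool is just the sum of what every node contributes.
    """
    total_capacity = sum(n.capacity_tb for n in nodes)
    total_throughput = sum(n.throughput_mbps for n in nodes)
    return total_capacity, total_throughput


# Four identical nodes, each with its own local storage.
cluster = [StorageNode(f"node{i}", capacity_tb=10.0, throughput_mbps=500.0)
           for i in range(1, 5)]
print(logical_view(cluster))   # (40.0, 2000.0)

# Scaling out: one more node grows both figures at the same time.
cluster.append(StorageNode("node5", capacity_tb=10.0, throughput_mbps=500.0))
print(logical_view(cluster))   # (50.0, 2500.0)
```

With a shared SAS JBOD, by contrast, adding a file server node would not add any capacity to the pool.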

The real world is, of course, a little more complicated and there are also other approaches.

But how do things change with the new Storage Spaces Direct in Windows Server Technical Preview?

With Storage Spaces Direct in Windows Server Technical Preview, you can now build a scale-out and highly available (HA) storage system using storage nodes with only local storage!
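Why can local-only storage still be highly available? A rough Python sketch of the general idea follows: keep every piece of data on more than one node. This is NOT how Storage Spaces Direct actually places data (that is not documented at this stage); the `place_mirrored` and `survives` functions, the slab concept and the node names are all assumptions made just for illustration.

```python
from itertools import combinations


def place_mirrored(num_slabs, nodes, copies=2):
    """Assign each slab to `copies` distinct nodes, cycling over node groups."""
    groups = list(combinations(nodes, copies))
    return {slab: set(groups[slab % len(groups)]) for slab in range(num_slabs)}


def survives(placement, failed_nodes):
    """True if every slab still has at least one copy on a healthy node."""
    failed = set(failed_nodes)
    return all(owners - failed for owners in placement.values())


nodes = ["node1", "node2", "node3", "node4"]
placement = place_mirrored(num_slabs=12, nodes=nodes)

# Any single node can fail without losing data...
print(all(survives(placement, [n]) for n in nodes))   # True

# ...but a two-way mirror does not cover every double failure.
print(survives(placement, ["node1", "node2"]))        # False
```

The price of this approach is that every write has to travel over the network to reach the other copies, which is why the back-end network matters so much in this kind of design.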

You can use servers with disk devices that are internal to each storage node:

Or servers with disk devices in JBODs where each JBOD is only connected to a single storage node:

This completely eliminates the need for a shared SAS fabric and its complexities, but also enables using devices such as SATA disks, which can help further reduce cost or improve performance. The diagram below illustrates a “Storage Spaces Direct” deployment.

Requirements are not yet clear at this phase: for sure you need Windows Server 2016 (at least TP2), local storage (which local controller specifications? Only SAS and JBOD, or also RAID0?) and a back-end network for data replication (10 Gbps or 40 Gbps speed? Only RDMA?)… All those aspects will be clarified in the RC or the GA of Windows Server 2016.

But it’s now confirmed that Storage Spaces Direct uses ReFS as the file system of choice because of scale, integrity and resiliency.

Storage Spaces Direct seamlessly integrates with the features you know today that make up the Windows Server software-defined storage stack, including Scale-Out File Server, Cluster Shared Volume File System (CSVFS), Storage Spaces and Failover Clustering:

See also:

- Storage scale-up vs. scale-out

- Other interesting new features of Windows Server 2016

- Ignite 2015 – Storage Spaces Direct (S2D)

- Testing Storage Spaces Direct using Windows Server 2016 virtual machines

- Could Microsoft become a storage vendor?

- Windows Scale Out File Server (SOFS) is not exactly a scale-out storage