On the third day of the third edition of Virtualization Field Day (#VFD3), the last company that we (as delegates) met was VMTurbo, a well-known company in the large virtualization ecosystem.

The core founding team started VMTurbo in 2009 (the founders' earlier company, SMARTS, was acquired by EMC), realizing that the rapid growth of virtualization in the datacenter was redefining the management requirements for companies of all sizes. The VMTurbo platform first launched in August 2010, and since then more than 9,000 cloud service providers and enterprises worldwide have deployed the platform, with 530+ customers. VMTurbo is backed by Bain Capital Ventures, Highland Capital Partners, and Globespan Capital. The company is headquartered in Massachusetts, with offices in New York, California, the United Kingdom and Israel.

During this session (mostly led by VMTurbo President Shmuel Kliger) we heard about the VMTurbo Operations Manager product and how it can ensure that applications get the resources they need to operate reliably, while using infrastructure and human resources in the most efficient way. Some months ago, I wrote a post about this product when VMTurbo announced Operations Manager 4.0.

Despite the name, this product is not something like VMware Operations Manager or other similar tools (in fact it can work alongside them and complement them). VMTurbo delivers an Intelligent Workload Management solution for cloud and enterprise virtualization environments, with the goal of helping customers get the most out of their virtualization deployment and continue to expand it. VMTurbo also uses an economic scheduling engine to dynamically adjust resource allocation to meet business goals.

This means that the product is more like a resource broker (or a cloud broker when used with cloud resources): it supports the decision of where to place workloads according to the cost and utilization of the underlying resources.

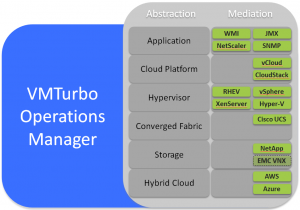

It’s a Software-Driven Control approach, usable for the Software-Defined Data Center (but not only), that aims to transform IT operations. One instance of the software manages the environment as you want, using different control modules.

This control plane spans from Applications through to the hypervisor compute and related logical storage and network presentation. It also extends to the storage layer “beneath the virtualization layer” – driving resource allocation decisions in the underlying physical and logical storage constructs such as storage volumes, aggregates, controllers and filers.

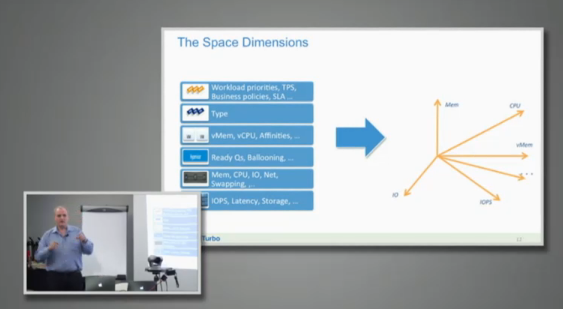

More importantly, it can go deep into every single abstraction layer (also through several mediation modules) to gather the required information on all of the resources in your clusters, including compute, storage, network, memory and so on, building a multi-dimensional space.

More data can be gathered, not only from the virtual world but also from the physical world, using different extensions:

- Storage Extension: Understands the interdependencies between the virtualization and storage layers to address workload performance and efficiency problems that are more often the result of issues at the storage layer

- Application Extension: Instruments at the application layer and integrates with application delivery controllers to intelligently prioritize resource allocation based on application priority and performance, scaling load-balanced application farms up or down as demand fluctuates

- Hybrid Cloud Extension: Provides specific guidance regarding the “what, when and where” of running workloads in private, public and hybrid cloud infrastructure

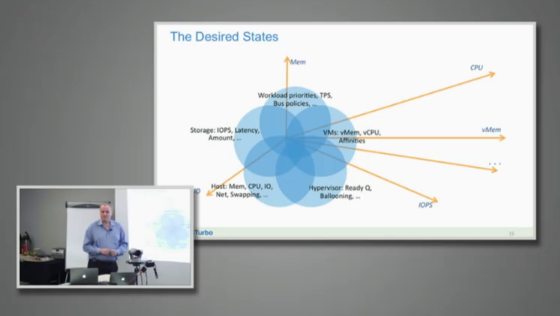

With all this data the tool will find a desired state, also taking financial parameters into account.

All those recommendations work much like the stock market: a marketplace of buyers and sellers. It can answer the question: which workload, when and where.
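To make the marketplace idea a bit more concrete, here is a minimal sketch in Python. It is only my own illustration, not VMTurbo's actual engine or data model: the `Host` class, the `base_cost` field and the pricing rule (price grows steeply as utilization approaches 100%) are all assumptions. Hosts act as sellers that quote a price for their resources, and a workload acts as a buyer that picks the cheapest quote.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    """A seller of capacity (hypothetical model, for illustration only)."""
    name: str
    capacity: dict                      # e.g. {"cpu": 10, "mem": 10}
    used: dict = field(default_factory=dict)
    base_cost: float = 1.0              # assumed financial parameter per unit

    def price(self, resource, extra=0.0):
        """Assumed rule: the busier the resource, the higher its price."""
        u = (self.used.get(resource, 0.0) + extra) / self.capacity[resource]
        if u >= 1.0:
            return float("inf")         # nothing left to sell
        return self.base_cost / (1.0 - u) ** 2

    def quote(self, demand):
        """Total price a workload would pay to run on this host."""
        return sum(amount * self.price(res, extra=amount)
                   for res, amount in demand.items() if amount > 0)


def place(demand, hosts):
    """The buyer shops around and moves to the cheapest seller, if any."""
    best = min(hosts, key=lambda h: h.quote(demand))
    if best.quote(demand) == float("inf"):
        return None                     # no host can satisfy the demand
    for res, amount in demand.items():
        best.used[res] = best.used.get(res, 0.0) + amount
    return best


busy = Host("busy", {"cpu": 10, "mem": 10}, {"cpu": 8, "mem": 8})
idle = Host("idle", {"cpu": 10, "mem": 10}, {"cpu": 2, "mem": 2})
print(place({"cpu": 1, "mem": 1}, [busy, idle]).name)  # -> idle
```

In this toy model a lightly loaded host wins on price, but raising its `base_cost` (say, an expensive cloud tier) can tip the decision back toward a busier, cheaper host, which is the kind of trade-off between utilization and cost described above.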

More importantly, it can handle different types of environments and, for example, suggest placement across different types of hypervisors, public cloud services, or storage.

The value proposition of VMTurbo's products is the idea of adding an intelligence engine to the data center, based not only on a technical model but also on other models, including financial ones. What I really appreciated in these sessions were the animated conversations about the approach and the methods used to deliver this value.

Their approach to deploying the tool is also interesting. Deployment is really simple: in 10 minutes it can start collecting significant data (the main steps are download, configure and collect), and fine tuning is not really necessary because the default parameters are already fine. Even so, they usually run a WebEx session with the customer in order to explain some concepts and how to better understand the tool and its value. It’s not difficult to deploy, but it’s not so easy to use it at its full power and capabilities without the right hints.

The best way to understand the value of VMTurbo Operations Manager is simply to try it: you can download the demo from the VMTurbo web site and start playing with it.

For more information see also:

- VMTurbo @#VFD3 (Tech Field Day 3 – Virtualization)

- VMTurbo video at #VFD3

- VMTurbo as a Market Economy

- VMTurbo – turbo charging your IT operations

Disclaimer: I’ve been invited to this event by Gestalt IT and they pay for accommodation and travel, but I’m not compensated for my time and I’m not obliged to blog. Furthermore, the content is not reviewed, approved or published by any other person than me.